Continued Monitoring of the Situation

Birbs, week two — what the system got wrong, four times, and what came back from the dead

Follow-up to "Monitoring the Situation — The Internet of Birbs"

When I hit publish on the birbs post last Wednesday, I described an "AI-powered nest monitor" with a straight face. The pipeline was wired, the cron was running, and Gemini Flash was confidently producing structured JSON about a bird in a cup. Beautiful.

By Thursday morning, 36 clips were stranded on a Raspberry Pi, the Wyze cam I had retired in past tense in the post was back from the grave, and the AI had quietly decided that the female House Finch was a male and was telling me, in steady well-formatted prose, that the male had been incubating five eggs all week.

He hadn't been.

I caught that one. Then I caught two more like it. Then I rewrote the whole pipeline's prompt and re-ran the entire archive from scratch. Then I went looking, because someone smarter than the model told me to, and found one more bias of the same shape, and re-ran the entire archive again.

So this is the recap. Nine days reclassified, five hundred-plus clips re-described, three drafts of this post that turned out to be wrong on the central facts before this one. The pipeline finally agrees with the live view at 6 a.m.

What the birbs actually did

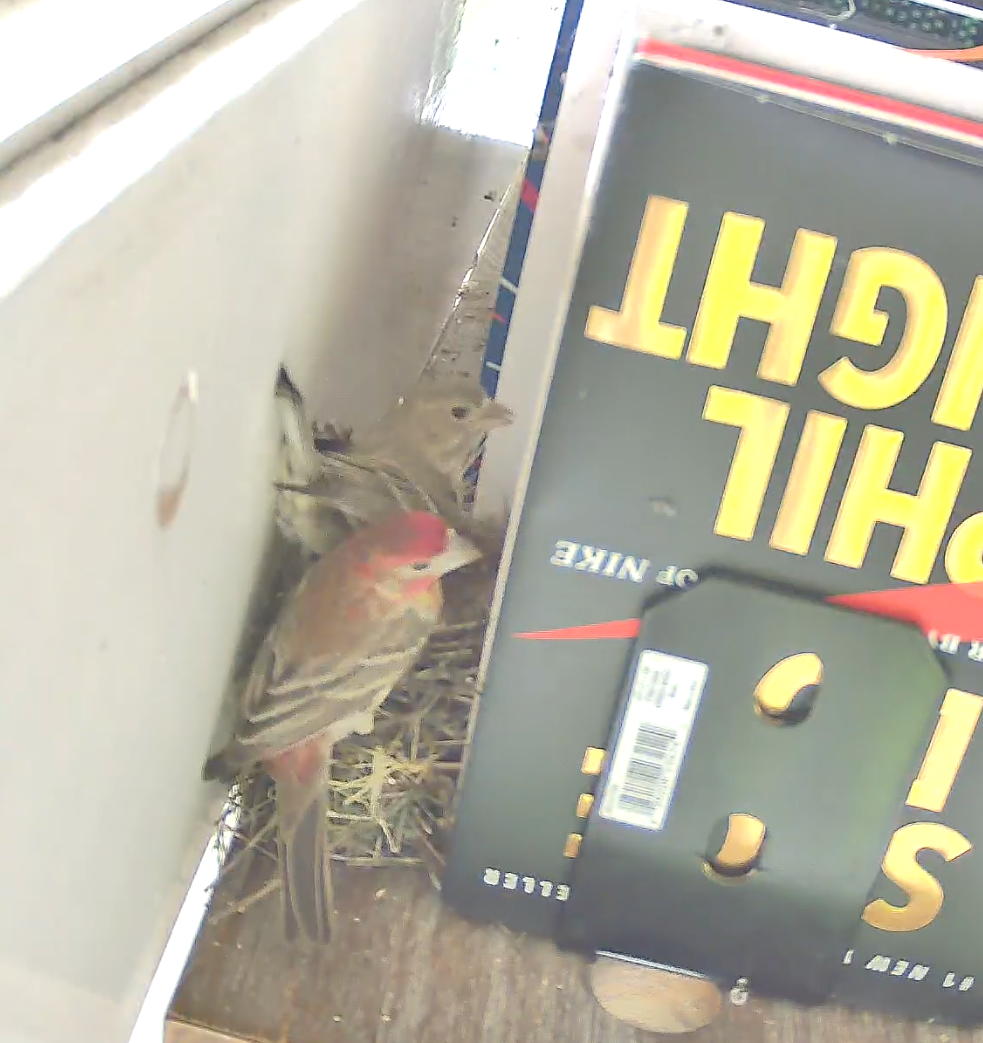

Five eggs in the cup, hatch window 9–12 May, roughly six to nine days out as I write this. The female is doing all the right things: present at dawn, present overnight, present at dusk, body settled low in the cup, head tucked. Standard incubation behavior straight out of every reference I can find.

The clutch on 25 April. She kept laying for another day or two; the count is now five and steady.

A few honest observations from the week, now that I have a pipeline whose summaries match what I see with my own eyes:

- Clutch stable at five eggs. The original post said two; that's what was visible on 25 April when I first peeked behind the books. She kept laying through 27 or 28 April, and the count's been five and steady ever since.

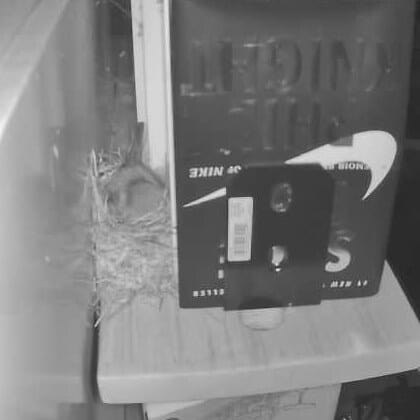

- She's on the nest near-continuously overnight. Across every reclassified day in the dataset, the number of clips between 21:00 and 06:00 that show a genuinely empty cup is zero. She shifts position, she settles deeper, she occasionally tucks so far in that all you see is a low-contrast brown lump in IR — but she does not get up and leave. (My pipeline disagreed with this for several days. I disagreed back. More on that below.)

- The male does courtship feeding all day, every day. This is a documented House Finch behavior that the pipeline had completely missed, but that I had observed in real time a number of times: during incubation, the male flies to the nest several times a day with food in his beak and feeds the brooding female in place at the rim of the cup. He does not enter the cup, he does not relieve her, he does not perch decoratively — he is delivering food. Across the corrected nine-day archive, the pipeline now counts about sixty of these visits — three to fifteen per day, depending on weather, time, and how often the female steps off to forage. The cute part, and the reason I'd caught it live a few times, is that the pair also use these visits to have a nice little chat with each other, and my bedroom happens to be next door to the office.

- Daytime breaks happen, but they're short and few. The female does take occasional foraging breaks during the day. I like to think of these as "leg stretches", but the studies are adamant that she's going off to feed herself for a bit. Most are 15–30 minutes; the longest in the dataset is the three-hour stretch on 26 April, when she was still finishing her clutch and incubation hadn't started in earnest. Once full-time incubation kicked in, the daytime breaks shortened.

- The bird tolerates humans in the room. I cleaned the sunroom in the middle of one of the busiest morning feeding windows and she did not flinch. The few times I've gone in to adjust the camera or some such, she'll fly out of the room and perch on a tree branch just outside keeping an eye out. If I'm taking too long, she'll fly back in and announce her presence... This has happened twice and it definitely has a "hey, hurry up already" vibe to it. In general though, we've made peace with each other.

That last bullet is, on reflection, probably the most important fact about these birds. Someone who saw the original post let me know that this is very common for House Finches: part of their nesting strategy is to get as close to humans as possible (without endangering the clutch), which keeps them out of reach of hawks, blue jays, and other predators.

Reolink IR, 22:37 PT on 28 April. The female sits low in the cup. At this resolution, in monochrome, with no color information, you cannot — just by looking — tell which bird this is, or even reliably tell that there is a bird at all. The model couldn't either. That turns out to matter, three different ways.

Four biases, one fix pattern

The pipeline produced four categories of bad daily summary across this week, and they all had the same shape.

Bias 1 — the brown blob is a male. Gemini, looking at the brooding female in IR or in low-light daylight, would default to bird_sex: male. Why? Because the structural prior the model had was "small bird sitting on a nest," which pattern-matches to "the parent that incubates," and the prompt had told it to decide whether that parent was "female" or "male" from color cues that were absent from the clip. With no color to go on, the model fell back on whatever its internal "small bird on a nest" stereotype was, which is apparently, somewhere in its training distribution, a male House Finch. House Finch incubation is, per every reference I can find, exclusively female — but that's a behavioral fact, not a visual one, and the prompt wasn't using it as a constraint.

So the per-clip JSONs were full of bird_sex: male claims about a bird that was almost certainly the female. The day-level summarizer (Gemini 2.5 Pro) read those JSONs at face value and produced beautifully written daily entries about "the male's repeated occupation of the cup" and "highly atypical behavior for a male House Finch." I read those entries with my coffee. I considered emailing an ornithology lab.

Bias 2 — the brown lump in IR is an empty cup. The female at night, with her head tucked, presents as a low-contrast, rounded shape in the dried-grass cup. Gemini in monochrome interpreted that as "the cup looks empty" in roughly a third of overnight clips. The day summaries duly reported that "the female cycled on and off in 15–45 minute increments through the entire night, leaving five exposed eggs visible on IR." Casey reading this in the morning: "…that's not what I'm seeing in the live view at 6 a.m." It wasn't. She'd been there the whole time.
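Bias 2 is also the kind of claim a cheap cross-check can surface before the day summarizer ever reads it. A minimal sketch, assuming a hypothetical per-clip record with a local hour, minute, and tag (the field names are illustrative, not the pipeline's actual JSON schema). The one subtle part is that 21:00 to 06:00 wraps midnight, so the window is the union of two ranges, not a single interval:

```typescript
// Hypothetical per-clip record; the pipeline's real JSON schema may differ.
interface ClipRecord {
  localHour: number;   // 0-23, camera-local (Pacific) time
  localMinute: number; // 0-59
  tag: string;         // e.g. "incubating", "empty_nest", "feeding"
}

// True when hh:mm falls in [startHour:00, endHour:00). When the window wraps
// midnight (start > end), that means [start, 24:00) OR [00:00, end).
function inOvernightWindow(hour: number, minute: number, startHour = 21, endHour = 6): boolean {
  const t = hour * 60 + minute;
  const start = startHour * 60;
  const end = endHour * 60;
  return start > end ? t >= start || t < end : t >= start && t < end;
}

// Overnight clips labeled empty_nest. With a female who sits all night,
// every entry here deserves a look at the actual frames.
function suspiciousOvernightEmpties(clips: readonly ClipRecord[]): ClipRecord[] {
  return clips.filter((c) => c.tag === "empty_nest" && inOvernightWindow(c.localHour, c.localMinute));
}
```

A nonzero result doesn't prove the model wrong on its own, but it is a short list to check against the live view instead of a paragraph of confident prose.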

Bias 3 — the male visiting the nest is just a perch. Gemini, looking at clips where the male arrives at the nest while the female is on the cup, defaulted to a literal description: "male perched at the rim, female incubating." Which is technically what's in the frame. What's actually happening is courtship feeding — the male is bringing food in his beak and feeding her — and the prompt never told the model that this was the likely interpretation. So the day summaries called these moments "visits" or "reliefs" instead of feedings, and the most striking behavior in the dataset was effectively invisible.

Bias 4 — courtship feeding only counts when the female is on the cup. This one I missed on the first pass. The "courtship feeding default" I added to fix bias 3 said, in effect, "if you see the male near the cup while the female is on it, default to feeding." That worked great when the female was visibly on the cup. It did not work when she had stepped off for thirty seconds — the male would arrive at the empty rim looking for her, the model would dutifully report "male perched at empty nest cup," and the day summary would call it nothing at all. It was caught by someone looking over the corrected data: "there's still feedings being missed." He was right. The behavior is so reliable during incubation that any visit by the male to the nest area should default to courtship feeding, regardless of whether the female is in frame. Same prompt fix, fourth time: name the bias, write the default, redo the archive.
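With the defaults in place, a daily figure like "about sixty visits, three to fifteen per day" is a simple group-by over the per-clip output. A minimal sketch, assuming hypothetical date, tag, and notable fields. Requiring both the feeding tag and a courtship-feeding note is a design choice made here, not something the pipeline is confirmed to do:

```typescript
// Hypothetical per-clip summary shape; the real JSON may differ.
interface ClipSummary {
  date: string;     // local calendar day, e.g. "04-29"
  tag: string;      // e.g. "feeding", "incubating", "empty_nest"
  notable?: string; // free-text note from the model
}

// Count, per day, the clips the model tagged as courtship feeding.
function courtshipFeedingsPerDay(clips: readonly ClipSummary[]): Map<string, number> {
  const counts = new Map<string, number>();
  for (const clip of clips) {
    const note = (clip.notable ?? "").toLowerCase();
    if (clip.tag !== "feeding" || !note.includes("courtship feeding")) continue;
    counts.set(clip.date, (counts.get(clip.date) ?? 0) + 1);
  }
  return counts;
}
```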

All four biases have the same shape: the prompt was missing a behavioral default that would constrain the model away from a misleading visual-literal read. Once I named the bias, the fix wrote itself:

- "House Finch incubation is exclusively female. Males do not sit on eggs. If the bird is sitting in the cup and you cannot clearly see red plumage, default bird_sex: female."
- "Between 21:00 and 06:00, the brooding female is on the nest essentially continuously. Do not label any clip in this window empty_nest unless the cup is unambiguously empty across every frame."
- "House Finch males do not incubate, but they routinely perform courtship feeding of the incubating female. When the male is at or near the cup — regardless of whether the female is in frame — the default interpretation is tag: feeding with notable: courtship feeding — male visiting incubating female's nest."

I added all of it to the prompt as a single "Behavioral defaults (CRITICAL)" block, then re-ran the entire archive of footage from scratch — every clip from 25 April onward, re-described against the corrected prompt, every day re-summarized. The post you're reading is the fourth draft because the previous three were built on data the model was confidently wrong about, in slightly different ways each time.
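Mechanically, the fix is just text added to the per-clip prompt. A minimal sketch of assembling that block, assuming the defaults live in a constant and are appended after whatever base prompt the pipeline already uses. The post doesn't say where in the prompt the block sits; the constant and function names are hypothetical, and the rule strings paraphrase the quotes above:

```typescript
// Paraphrased from the three defaults quoted in the post.
const BEHAVIORAL_DEFAULTS: readonly string[] = [
  "House Finch incubation is exclusively female. Males do not sit on eggs. " +
    "If the bird is sitting in the cup and you cannot clearly see red plumage, default bird_sex: female.",
  "Between 21:00 and 06:00, the brooding female is on the nest essentially continuously. " +
    "Do not label any clip in this window empty_nest unless the cup is unambiguously empty across every frame.",
  "House Finch males do not incubate, but they routinely perform courtship feeding of the incubating female. " +
    "When the male is at or near the cup, whether or not the female is in frame, " +
    "the default interpretation is tag: feeding with notable: courtship feeding.",
];

// Append the defaults as one clearly labeled, numbered block so the model
// reads them as constraints on interpretation, not as more scene description.
function buildClipPrompt(basePrompt: string, defaults: readonly string[] = BEHAVIORAL_DEFAULTS): string {
  const block = [
    "Behavioral defaults (CRITICAL):",
    ...defaults.map((rule, i) => `${i + 1}. ${rule}`),
  ].join("\n");
  return `${basePrompt.trimEnd()}\n\n${block}\n`;
}
```

Keeping the rules as data also makes amending one cheap, which is exactly what bias 4 required.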

A note on what this isn't: it's not a stronger model. While debugging I went and looked at whether a frontier Flash variant would help. The doc page I read claimed gemini-3.1-flash-preview existed; the API politely informed me that it does not. (The actual frontier preview is gemini-3-flash-preview, which is what I ended up wiring as an optional override.) The current production model — gemini-2.5-flash — was completely fine once the prompt told it the right defaults. The mis-id was never a perception problem. Gemini could see the bird, count the eggs, describe the scene. It was a constraint problem. A frontier model with the same loose prompt would have produced the same biases, because color-blind sexing-by-incubation is a perceptual prior, not a perception failure.
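The override itself is small. A minimal sketch of resolving the two model names from the BIRBS_FLASH_MODEL and BIRBS_PRO_MODEL variables and defaults described later in this post; the helper name and the treat-empty-as-unset behavior are assumptions:

```typescript
type ModelConfig = { flash: string; pro: string };

// Env var names and defaults come from the post; the helper is a sketch.
function resolveModels(env: Record<string, string | undefined>): ModelConfig {
  // Treat unset and blank values the same, so `BIRBS_FLASH_MODEL=` falls back.
  const pick = (name: string, fallback: string): string => {
    const value = env[name]?.trim();
    return value ? value : fallback;
  };
  return {
    flash: pick("BIRBS_FLASH_MODEL", "gemini-2.5-flash"), // per-clip descriptions
    pro: pick("BIRBS_PRO_MODEL", "gemini-2.5-pro"),       // day-level summaries
  };
}

// Usage: const models = resolveModels(process.env);
```

Setting BIRBS_FLASH_MODEL=gemini-3-flash-preview swaps the per-clip model without touching code. A misspelled name like gemini-3.1-flash-preview still only fails when the API rejects it.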

The lesson, which I am writing down so I don't have to learn it again: when an AI is confidently wrong in a systematic way, the bug is probably in your prompt, not in your model.

What broke immediately after the post went up

The published post described "two parallel camera.record actions" firing on every motion event — one to the Pi's /media/, one to the NAS via CIFS, full redundancy, fault-tolerant, etc. This was true in the YAML. It was not true at runtime.

Three things, in sequence:

1. The NAS share was silently rejecting HA's writes, and HA's camera.record swallows the error. A permissions mismatch between the SMB share and the user that Home Assistant's CIFS mount authenticates as meant every NAS write quietly failed. HA logged nothing, because camera.record does not raise on storage errors — the recording gets to "I would like to write this file" and then quietly does not. From 28 April 21:21 PT to 30 April 09:24 PT, the Pi had clips and the NAS did not. The pipeline still ran every night; daily-bird-story.ts summarized the empty NAS dir and reported "no events." Which, helpfully, is also what the script would say if the birds had abandoned the nest. So that was a fun couple of days. Fix: realign the share's ownership with what HA's mount expects, then backfill the 36 stranded clips off the Pi.

2. The two parallel camera.record calls don't actually run in parallel. HA's stream worker is a per-camera singleton. Fire two camera.record actions on the same camera at the same time and the second one dies with Stream already recording. So in the moments where the NAS was writable, only one of the two writes was actually landing — and which one won depended on which call arrived first. The fix is unglamorous: write to the NAS first, then delay: 33s, then a shell_command that copies the file from the NAS mount over to the Pi. Same dual-copy redundancy. No race.

3. HA's camera.record re-encodes through the stream worker, and the output bitrate is roughly 1/12 of the source. A 30-second motion clip from the Reolink E1 Pro is ~12 MB at the source; HA's recorded version is ~1 MB. For "is there a bird in this frame" Gemini doesn't notice. For anything I want to keep — the eventual hatching footage in particular — I'd like the full-bitrate originals. So I wrote reolink-sync.ts: it logs into the Reolink directly, lists the camera's own SD-card recordings, dedupes against what's already on the NAS within ±15 s (NAS HA-recorded filenames lag SD-recorded events by 10–11 s thanks to pre-buffer + HA fire delay), and pulls the full-res originals over scp. Runs every 30 minutes via launchd.
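The ±15 s dedupe in item 3 is a nearest-timestamp join. A minimal sketch, assuming both filename sets embed a YYYYMMDD_HHMMSS start time (true of the wyze_clip_ names below; the SD-card and HA names used here are hypothetical). It only pairs files; whether reolink-sync.ts then skips, replaces, or keeps both isn't spelled out, so that decision is left out:

```typescript
// Parse the first YYYYMMDD_HHMMSS in a filename into epoch seconds.
// Treated as UTC purely for arithmetic: both sides use the same clock,
// so only the difference between timestamps matters.
function parseClipTime(filename: string): number | null {
  const m = /(\d{4})(\d{2})(\d{2})_(\d{2})(\d{2})(\d{2})/.exec(filename);
  if (!m) return null;
  const [y, mo, d, h, mi, s] = m.slice(1).map(Number);
  return Date.UTC(y, mo - 1, d, h, mi, s) / 1000;
}

interface SdMatch {
  sd: string;               // SD-card recording filename
  nas: string | null;       // closest NAS clip within tolerance, if any
  offsetSec: number | null; // NAS start minus SD start (positive = NAS later)
}

// Pair each SD recording with the closest NAS clip starting within ±toleranceSec.
// HA's clips start ~10-11 s after the SD event, so 15 s leaves some slack.
function matchSdToNas(sdFiles: readonly string[], nasFiles: readonly string[], toleranceSec = 15): SdMatch[] {
  const nas = nasFiles
    .map((f) => ({ f, t: parseClipTime(f) }))
    .filter((x): x is { f: string; t: number } => x.t !== null);
  return sdFiles.map((sd) => {
    const t = parseClipTime(sd);
    let best: { f: string; d: number } | null = null;
    if (t !== null) {
      for (const n of nas) {
        const d = n.t - t;
        if (Math.abs(d) <= toleranceSec && (best === null || Math.abs(d) < Math.abs(best.d))) {
          best = { f: n.f, d };
        }
      }
    }
    return { sd, nas: best ? best.f : null, offsetSec: best ? best.d : null };
  });
}
```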

So that's three of the post's bullet points either subtly wrong or quietly broken in the day after publication. Cool, cool, cool.
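Item 1 was the nastiest of the three, because an unwritable NAS and an abandoned nest both produce the same "no events" summary. A minimal sketch of a guard that could run before the summarizer, assuming the day's clip counts on the Pi and the NAS are available and that the 15-minute heartbeat recording described later in this post is running, so even a motionless day should yield 96 clips. The names and result shape are hypothetical:

```typescript
type DayCheck =
  | { kind: "ok"; clips: number }
  | { kind: "nas_behind_pi"; piClips: number; nasClips: number }
  | { kind: "too_few_clips"; nasClips: number; minExpected: number };

// One heartbeat clip every 15 minutes means a full day holds at least 96 clips.
const HEARTBEATS_PER_DAY = (24 * 60) / 15;

// Classify a finished day before summarizing it, so "no events" can't be
// produced silently by a broken write path.
function checkDay(piClips: number, nasClips: number, minExpected = HEARTBEATS_PER_DAY): DayCheck {
  if (nasClips < piClips) return { kind: "nas_behind_pi", piClips, nasClips };
  if (nasClips < minExpected) return { kind: "too_few_clips", nasClips, minExpected };
  return { kind: "ok", clips: nasClips };
}
```

The Pi-versus-NAS comparison catches the original failure. After the item 2 fix, though, the Pi copy is made from the NAS file, so a dead NAS would starve both; the heartbeat floor is what still catches that case.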

And then the Wyze came back

I had retired the Wyze cam in the post. I described it in past tense: "the Wyze gave us our first real look at the female sitting low in the cup." The Wyze firmware and RTSP ecosystem is pretty hostile to anything other than "log in to the website and pay the subscription fee" usage (including DMCA takedowns against right-to-repair researchers... tsk-tsk), so those shenanigans, combined with my "don't buy another camera" scarcity constraint, ended with me retiring it.

I called that one too soon. It is, once again, present tense.

Wyze close-up at 06:16 on 27 April. The female low in the cup, at a crop the Reolink at this distance simply cannot reproduce. This is what the Wyze is back to give us when the chicks arrive.

The Wyze has two things going for it that the Reolink does not at this position: a closer crop (the nest fills a meaningful chunk of the frame instead of being a small region inside a wider scene), and a hard-burned OSD timestamp on every frame. For training-set-quality footage of the actual hatching, those two things matter more than I'd given them credit for. So this week PAI and I wrote wyze-sync.ts, which is — and I want to be clear about this — unreasonably annoying to write.

Wyze v4 firmware does not expose RTSP. The only way to retrieve motion clips is through Wyze's cloud, which serves them as DASH manifests guarded by AWS Kinesis Video Streams session tokens that expire in 5–15 minutes. Wyze has no public API, so this is ghetto scripted cookie-snarfing, AI-style.

The auth flow, roughly:

- POST to auth.wyze.com/oauth/token with email + MD5(password) + a TOTP code if 2FA is on.
- POST to /app/v4/device/get-event-list for motion events in the search window.
- For each event, POST to /app/v4/replay_url to get the signed DASH manifest.
- Hand the manifest to ffmpeg immediately and mux the segments to a local .mp4 before the token expires.
- scp to the NAS as wyze_clip_YYYYMMDD_HHMMSS.mp4 so daily-bird-story.ts picks it up alongside the Reolink clips.
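The last two steps are the time-critical ones. A minimal sketch of the remux and the naming, assuming ffmpeg is on the PATH, was built with DASH demuxing support, and can read the signed manifest URL directly. The flags are assumptions, since the post doesn't show what wyze-sync.ts actually runs; stream copy (-c copy) skips re-encoding, which keeps the step short relative to the 5 to 15 minute token lifetime:

```typescript
import { spawnSync } from "node:child_process";

// NAS filename for an event start time: wyze_clip_YYYYMMDD_HHMMSS.mp4.
// Uses UTC getters; if the rest of the archive is named in Pacific time,
// format in that zone instead so clips sort together.
function wyzeClipName(eventStart: Date): string {
  const two = (n: number) => String(n).padStart(2, "0");
  const d = eventStart;
  return (
    `wyze_clip_${d.getUTCFullYear()}${two(d.getUTCMonth() + 1)}${two(d.getUTCDate())}` +
    `_${two(d.getUTCHours())}${two(d.getUTCMinutes())}${two(d.getUTCSeconds())}.mp4`
  );
}

// Remux the DASH segments into one local MP4 without re-encoding.
function dashRemuxArgs(manifestUrl: string, outPath: string): string[] {
  return ["-y", "-i", manifestUrl, "-c", "copy", outPath];
}

// Run ffmpeg synchronously and fail loudly rather than leaving a partial file unnoticed.
function remux(manifestUrl: string, outPath: string): void {
  const result = spawnSync("ffmpeg", dashRemuxArgs(manifestUrl, outPath), { stdio: "inherit" });
  if (result.error) throw result.error; // e.g. ffmpeg not installed
  if (result.status !== 0) throw new Error(`ffmpeg exited with status ${result.status}`);
}
```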

The token-refresh logic alone is its own script (wyze-token-refresh.ts), because the access token expires every ~24 hours and I do not want to be paged at 04:00 PT to manually log into Wyze's auth flow because the chicks' close-up footage stopped streaming. Cam Plus is required (free-tier accounts get an empty array back from replay_url). The endpoints aren't documented; I sniffed them out of the web app's network tab.
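If the refresh script has to log back in unattended with 2FA on, it needs to produce the TOTP code itself. A minimal sketch, assuming the account uses a standard authenticator-app secret (RFC 6238 with the usual HMAC-SHA1, 30-second steps, 6 digits) available as raw bytes. The post doesn't describe how wyze-token-refresh.ts handles this; the ~24-hour lifetime is from the post, while the one-hour refresh margin is an assumption:

```typescript
import { createHash, createHmac } from "node:crypto";

// MD5(password) as lowercase hex, matching the single MD5 described above.
function md5Hex(text: string): string {
  return createHash("md5").update(text, "utf8").digest("hex");
}

// RFC 6238 TOTP. `key` is the raw shared secret; authenticator apps usually
// display it base32-encoded, so decode it before calling this.
function totp(key: Buffer, unixSeconds: number, digits = 6, stepSeconds = 30): string {
  const counter = Math.floor(unixSeconds / stepSeconds);
  // 8-byte big-endian counter, written as two 32-bit halves (avoids BigInt).
  const msg = Buffer.alloc(8);
  msg.writeUInt32BE(Math.floor(counter / 2 ** 32), 0);
  msg.writeUInt32BE(counter >>> 0, 4);
  const mac = createHmac("sha1", key).update(msg).digest();
  // RFC 4226 dynamic truncation: 31 bits starting at the offset in the last nibble.
  const offset = mac[mac.length - 1] & 0x0f;
  const code =
    ((mac[offset] & 0x7f) << 24) |
    (mac[offset + 1] << 16) |
    (mac[offset + 2] << 8) |
    mac[offset + 3];
  return String(code % 10 ** digits).padStart(digits, "0");
}

// Refresh proactively once less than `marginSec` of the token's lifetime remains.
function needsRefresh(issuedAtSec: number, nowSec: number, lifetimeSec = 24 * 3600, marginSec = 3600): boolean {
  return nowSec >= issuedAtSec + lifetimeSec - marginSec;
}
```

The RFC 6238 test key (the ASCII string 12345678901234567890) at t = 59 yields 94287082 in 8-digit mode, which is a quick way to sanity-check an implementation before pointing it at a real account.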

This is a lot of scaffolding for a second camera angle on five eggs. I am aware. It's also fairly brittle and I'm quietly hoping no-one from Wyze reads this. None of this had to be useful — it just had to be possible.

(Bonus pipeline papercut: Wyze clips are exactly two seconds long and full-range YUV; ffmpeg's mjpeg encoder, running with default flags, refuses both. Two one-line fixes — -pix_fmt yuvj420p to convert to JPEG-compatible YUV, and clamping the frame-extraction seek time to dur - 0.1 so a 2-second clip doesn't get sampled at exactly t=2 — and the keyframe pipeline ate them happily. None of this was visible from the docs.)
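Both fixes live in the arguments handed to ffmpeg. A minimal sketch of a single-frame extraction, assuming the clip duration is already known (for example from ffprobe) and that frames are grabbed with input seeking; the function names are illustrative, and the 0.1 s margin is the one from the post:

```typescript
// Clamp the seek time so a short clip is never sampled at or past its end
// (a 2.0 s clip asked for t = 2 gets t = 1.9).
function clampSeek(requestedSec: number, durationSec: number, marginSec = 0.1): number {
  const latest = Math.max(0, durationSec - marginSec);
  return Math.min(Math.max(0, requestedSec), latest);
}

// ffmpeg arguments for one JPEG keyframe from a clip.
function keyframeArgs(input: string, output: string, requestedSec: number, durationSec: number): string[] {
  return [
    "-y",                   // overwrite an existing output file
    "-ss", clampSeek(requestedSec, durationSec).toFixed(3),
    "-i", input,
    "-frames:v", "1",       // emit exactly one video frame
    "-pix_fmt", "yuvj420p", // full-range (JPEG) 4:2:0, which the mjpeg encoder accepts
    output,
  ];
}
```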

A few smaller things I added this week

- 15-minute interval recording. A second HA automation that fires on time_pattern: minutes: '/15' and records a 30-second heartbeat clip regardless of motion. Mostly so I have the night-time footage I need to answer "is the bird actually on the eggs from 21:00 to 06:00, or is she just visiting," which motion-only clips can't answer. (And which, helpfully, was also the data that proved the model's "she leaves at night" reads were wrong.)
- sleep-wake-detect.ts. Walks every interval clip between 21:00 and 06:00 and asks Gemini Flash whether each visible bird's eye is open or closed and whether the head is tucked. Outputs a per-clip JSON with sleep_confidence, awake_confidence, and a multi-frame agreement score. Gives me a timeline of the night I can scan in five seconds. Sadly, this was the piece that broke with the HAOS/CIFS issues, but we've firmed that up and should get a look at it on tonight's run.
- Three morning emails. The naturalist's narrative (yesterday's prose entry from Claude, freshly regenerated against the corrected per-clip data), the most-interesting-moment of the day (top-scored clip with the keyframe attached, deduped via a state file so the same scene doesn't get emailed twice), and the sleep-wake timeline. They all land in my inbox at 07:00 PT.
- Project consolidated to ~/Projects/birbs/. When the post went up, the code lived in three different directories, two of which had different opinions about which model to call for which step. Now there's one repo, one cron set, one README, one source of truth (both for me, and for PAI when it goes to make adjustments; everything designed for both an AI and a human audience is Markdown).
- Models behind env vars. BIRBS_FLASH_MODEL and BIRBS_PRO_MODEL, defaulting to the production-stable gemini-2.5-flash and gemini-2.5-pro. The frontier preview (gemini-3-flash-preview, real this time) is a one-env-var swap away when chicks arrive and the workload changes.
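The post doesn't say how sleep-wake-detect.ts turns per-frame answers into its scores. One simple, hypothetical definition: call a frame "asleep" if the eye is closed or the head is tucked, report the asleep and awake fractions as the two confidences, and use the majority fraction as the agreement score (1.0 when every frame agrees, 0.5 at worst). Field names mirror the post's JSON keys; everything else is an assumption:

```typescript
// Hypothetical per-frame answer from the vision model for one bird.
interface FrameRead {
  eyesClosed: boolean;
  headTucked: boolean;
}

interface SleepWakeScore {
  sleep_confidence: number; // fraction of frames that read as asleep
  awake_confidence: number; // fraction of frames that read as awake
  agreement: number;        // fraction of frames agreeing with the majority call
}

// Returns null for a clip with no usable frames rather than inventing a score.
function scoreClip(frames: readonly FrameRead[]): SleepWakeScore | null {
  if (frames.length === 0) return null;
  // A tucked head usually hides the eye, so treat it as a sleep signal too.
  const asleep = frames.filter((f) => f.eyesClosed || f.headTucked).length;
  const sleep = asleep / frames.length;
  const awake = 1 - sleep;
  return { sleep_confidence: sleep, awake_confidence: awake, agreement: Math.max(sleep, awake) };
}
```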

Where this lands

Hatch window opens in six days. As of right now I have two cameras (one of which I had to socially engineer Wyze's cloud API to bring back), a NAS that actually accepts writes, two HA automations that don't race each other, a five-script pipeline that costs about a dollar a day to run, three morning emails wired into a Gmail send, and a vision prompt that no longer thinks the male is doing all the work, that the female is taking ten-hour breaks at night, that he's standing around decoratively when he visits, or that an empty nest with a male perched on the rim is a non-event.

The original post said the cost of weird, personal AI projects is tiny and the iteration is fast. Reporting back: I'll happily admit that a little time has gone into firming up this pipeline over the past few days, but overall the cost is still tiny. The iteration was not fast — but the parts that hard-broke after publication weren't the AI parts. They were SMB perms, an HA stream-worker race condition, a cloud-camera vendor who decided RTSP was somebody else's problem, and ffmpeg silently refusing to encode the YUV that Wyze ships. A general design anti-pattern I'd introduced was silent failure: there are now consistent checks, heartbeats, and emailed alerts, so that if something else goes bump in the night, the problem comes to me instead of me going to it. In hindsight this was a really simple oversight on my part, but this resilience work should set things up to capture the hatchlings without a hitch.
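The post doesn't list its checks, but the 15-minute heartbeat clips make one easy to state: if the newest clip on the NAS is older than a couple of heartbeat intervals, something upstream is broken regardless of what the birds are doing. A minimal sketch, assuming the newest clip's start time is available in epoch seconds; the function name and the two-missed-intervals threshold are assumptions:

```typescript
interface Staleness {
  stale: boolean;
  ageSec: number | null; // null when there is no clip at all
}

// Stale once more than (missedAllowed + 1) heartbeat intervals have passed
// without a new clip. An empty directory counts as stale.
function checkStaleness(
  newestClipSec: number | null,
  nowSec: number,
  intervalSec = 15 * 60,
  missedAllowed = 2,
): Staleness {
  if (newestClipSec === null) return { stale: true, ageSec: null };
  const ageSec = nowSec - newestClipSec;
  return { stale: ageSec > intervalSec * (missedAllowed + 1), ageSec };
}
```

Run on a schedule, a stale result is what would trigger the emailed alert, so the "no events" silence of the first week can't repeat unnoticed.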

Most of those are infrastructure problems older than I am. The soft breaks were the prompt problems, and they were the sneakier kind: they look like model failures and aren't, and each one was visible only after the previous one had been fixed.

Meanwhile, if nature continues to take its course, we'll know in a week what the chicks look like. The pipeline is (more) ready for them, the prompt has been beaten into shape, and the male — contrary to a brief week of false impressions — is unlikely to be the one feeding them. He'll be busy bringing food to her while she does it.