"Monitoring the Situation" - The Internet of Birbs

Two pale-blue speckled eggs on the sunroom bookshelf turned into three cameras, an Unraid NAS, two AI models, and a journal that writes itself every morning. None of it had to be useful — it just had to be possible. Because joy.

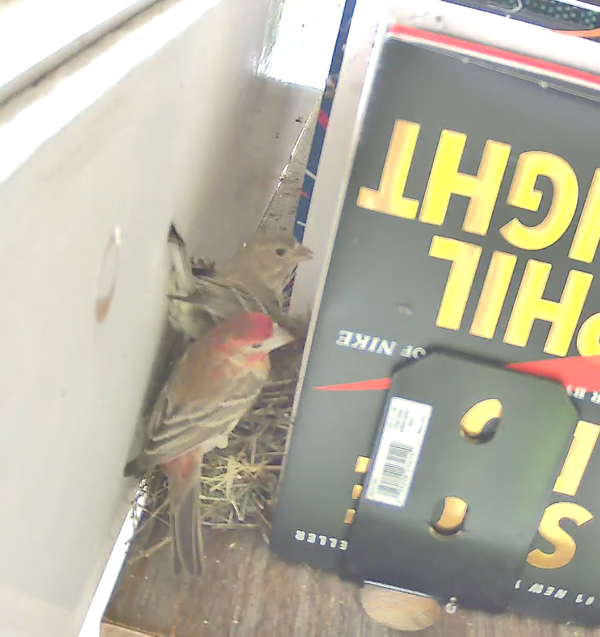

About a week and a half ago I was working in my home office (a sunroom where the windows are often left open) when I noticed that two particular birds kept on trying to fly inside. If I left the office for any decent period of time, when I came back in, the brown one in the pair would appear from behind a mounted bookshelf and promptly scarper out the window. Then a red one would appear, fly around a little on what appeared to be a recon run, and fly off until I was gone.

When the same thing happened the following day, I got curious and took a look behind the books...

Two pale-blue, speckled eggs sitting in a small cup of dried grass on my sunroom bookshelf, tucked against the spine of Shoe Dog. House Finches. Specifically: a female who'd quietly built a nest behind a Phil Knight memoir over the course of a week, and her partner with the bright red head who keeps showing up to drop off snacks.

Aside from it being insanely cute and a good reminder to clean my office, like any good 30+ year old I promptly became fascinated by my new friends, their little start-up incubator, and the process. So, as anyone too stubborn to buy a dedicated nest monitoring camera would, I cobbled together a hilariously overengineered AI-powered system to watch them and take notes on the progress.

It's been a nice little palate-cleanser. The whole exercise is about learning stuff, learning about my new friends, and a reminder that the most fun use of a fast-moving toolchain isn't always the most ambitious one. None of this had to be useful — it just had to be possible. That's the post.

Setup

A few of the eggs were already there before I started any of this — that's just how it goes with finches, they pick a spot and commit (a fun realization along the way was that momma was still in the process of laying her clutch when I discovered all of this). My job was to watch without disturbing them.

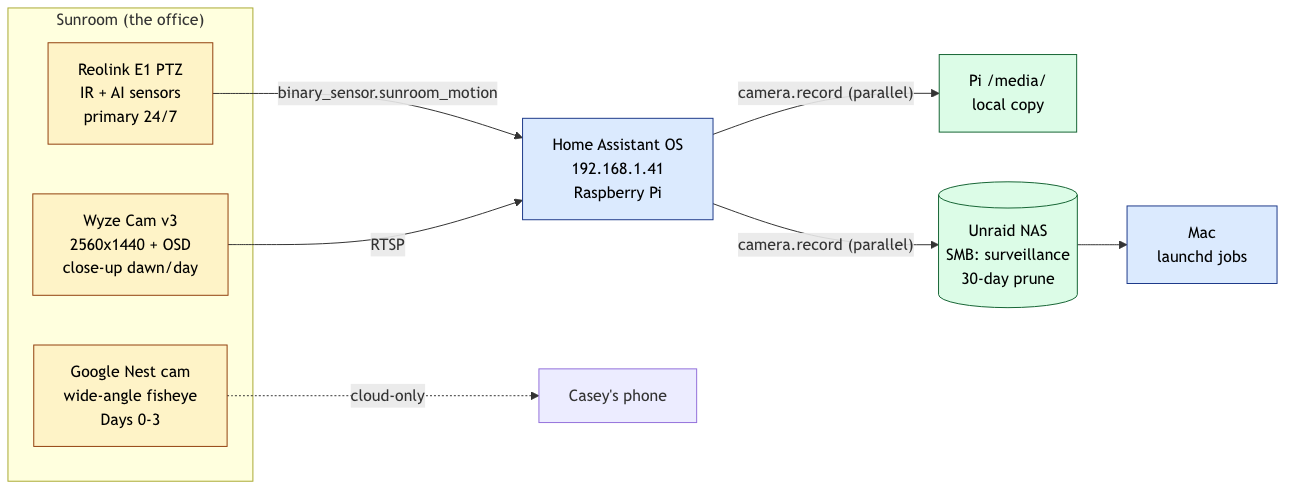

Specs, for the build-replication crowd:

- Cameras: Google Nest (existing wide-angle), Wyze Cam v3 ("Sunroom Wyze", 2560×1440, OSD timestamp burned in), Reolink E1-series PTZ (IR, AI sensors, on LAN; now the primary).

- Hub: Home Assistant OS 2026.4.4 on a Raspberry Pi, Reolink integration via config flow.

- Storage: Unraid NAS, SMB share `surveillance`, dual-write from HA on motion (Pi `/media/` + NAS via Supervisor CIFS), 30-day prune cron.

- Compute: Mac Mini on launchd: `daily-bird-story.ts` at 22:30, `morning-bird-narrative.ts` at 07:00.

- Models: Gemini 2.5 Flash (per-clip vision tagging), Gemini 2.5 Pro (day-stack journal entry), Claude (next-morning naturalist's prose).

- Glue: `bun`, `ssh`, `rsync`, `ffmpeg`. Around 400 lines of TypeScript.

- Cost: under a dollar a day at current motion volume.

The cameras stack up like layers of an archaeological dig; I constrained that part on purpose:

- Google Nest cam — wide-angle fisheye, already in the sunroom for general "what's happening in here" purposes. Saw the nest from far away. Got us through Days 0–3.

- Wyze cam — added later, much closer crop, 2560×1440, with a clean OSD timestamp burned into every frame. The Wyze gave us our first real look at the female sitting low in the cup.

- Reolink PTZ — installed yesterday as the long-term watcher. IR-capable for overnight, exposes motion and AI sensors over LAN, plays nicely with Home Assistant. Now the primary and only one.

All three cameras' clips eventually land on a single Unraid box, in an SMB share called surveillance. There's an HA automation that, on binary_sensor.sunroom_motion → on, fires two parallel camera.record actions — one writing to the Pi's /media/ and one to the NAS share via a Supervisor CIFS mount, so I always have two copies. A daily cron prunes the NAS share at 30 days. The whole thing took a few hours to wire up (using Daniel Miessler's PAI as my AI-powered development harness) and is mostly held together with ssh, bun, and ffmpeg.
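The post doesn't show the prune job itself, so here is a minimal sketch of the 30-day selection logic in TypeScript. The `ClipFile` shape and the `selectExpired` name are hypothetical, and the real cron may well be a one-line shell command instead.

```typescript
// Hypothetical shape for a clip on the NAS share; not taken from the post.
interface ClipFile {
  path: string;
  modifiedAt: Date;
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Return the clips whose modification time is older than `retentionDays`
// relative to `now`. The caller decides how to delete them.
function selectExpired(
  files: ClipFile[],
  now: Date,
  retentionDays = 30,
): ClipFile[] {
  const cutoff = now.getTime() - retentionDays * DAY_MS;
  return files.filter((f) => f.modifiedAt.getTime() < cutoff);
}
```

Keeping the selection separate from the deletion makes it easy to dry-run the prune and eyeball the list before anything is removed.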

So the data pipeline is fine. The interesting part is what happens to the clips after they land.

The pipeline

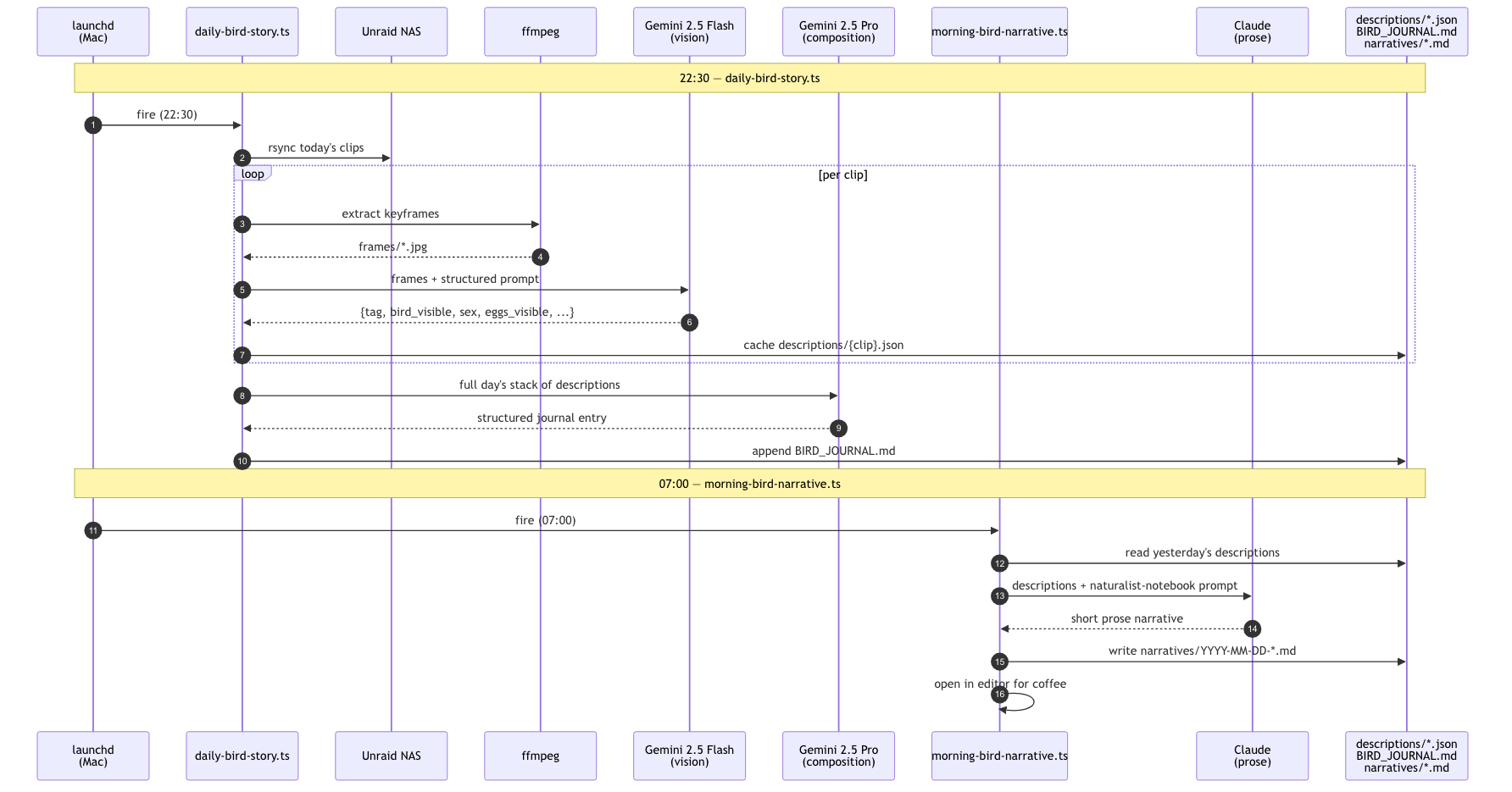

Two scheduled jobs run on my Mac via launchd:

22:30 — daily-bird-story.ts

For every clip recorded that day, it:

- Pulls the clip down from the NAS via `rsync`.

- Extracts a handful of keyframes with `ffmpeg`.

- Sends those frames to Gemini 2.5 Flash with a prompt that asks for a structured JSON description: `{tag, bird_visible, bird_present_at_any_point, bird_sex, eggs_visible, egg_count, description, notable}`.

- Caches that JSON to disk per clip.

- Hands the whole day's stack of descriptions to Gemini 2.5 Pro to compose a structured journal entry, scene-by-scene, and appends it to `BIRD_JOURNAL.md`.
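Since the per-clip JSON gets cached and later fed to two other models, it's worth checking its shape before writing it to disk. The field names below come from the prompt described above; the field types, the nullable `bird_sex`, and the `parseClipDescription` helper are my assumptions, offered as a sketch rather than the script's actual code.

```typescript
// Field names are from the prompt; the types are guesses.
interface ClipDescription {
  tag: string;
  bird_visible: boolean;
  bird_present_at_any_point: boolean;
  bird_sex: string | null; // assumed null when no bird is seen
  eggs_visible: boolean;
  egg_count: number;
  description: string;
  notable: unknown; // type not stated in the post, passed through as-is
}

// Parse a model response and return null if it is not valid JSON
// or is missing any of the fields we can reasonably type-check.
function parseClipDescription(raw: string): ClipDescription | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null) return null;
  const d = data as Record<string, unknown>;
  const ok =
    typeof d.tag === "string" &&
    typeof d.bird_visible === "boolean" &&
    typeof d.bird_present_at_any_point === "boolean" &&
    (typeof d.bird_sex === "string" || d.bird_sex === null) &&
    typeof d.eggs_visible === "boolean" &&
    typeof d.egg_count === "number" &&
    typeof d.description === "string";
  return ok ? (d as unknown as ClipDescription) : null;
}
```

A `null` result is a natural point to retry the clip or skip caching it, so one malformed response doesn't poison the day's stack.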

07:00 the next morning — morning-bird-narrative.ts

Reads the previous day's cached descriptions and asks Claude to write a short narrative — a working naturalist's notebook entry. Saves to a markdown file and opens it in Sublime so I see it when I sit down with coffee.

Three models, three jobs.

Gemini 2.5 Flash for per-clip vision. The hot path is "look at five frames, tell me if there's a brown blob in the cup." That's a high-volume, low-stakes vision task — hundreds of calls a day. Flash is cheap enough that getting it wrong on a clip costs less than a cent, fast enough to chew through a day's clips in a couple of minutes, and surprisingly good at "is the bird present, and if so, is it the brown one or the red one." Pro would be overkill; Claude vision would be more expensive for no gain at this scale.
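To make "five frames" concrete, here is one way the keyframe step could look: pick evenly spaced timestamps across the clip and build an `ffmpeg` argument list for each. Both function names are hypothetical, and even spacing is an assumption; the post doesn't say how the frames are chosen.

```typescript
// Evenly spaced timestamps (in seconds) inside the clip,
// skipping the exact first and last instants.
function keyframeTimestamps(durationSec: number, frameCount = 5): number[] {
  if (durationSec <= 0 || frameCount <= 0) return [];
  const step = durationSec / (frameCount + 1);
  return Array.from({ length: frameCount }, (_, i) =>
    Number(((i + 1) * step).toFixed(3)),
  );
}

// Arguments for ffmpeg to seek to `t` and write a single JPEG.
// -ss before -i seeks on the input; -frames:v 1 grabs one frame;
// -q:v 2 asks for high JPEG quality; -y overwrites an existing file.
function ffmpegFrameArgs(clipPath: string, t: number, outPath: string): string[] {
  return ["-ss", String(t), "-i", clipPath, "-frames:v", "1", "-q:v", "2", "-y", outPath];
}
```

Each argument list can then be handed to whatever process spawner the script uses, for example `Bun.spawn(["ffmpeg", ...args])` under bun.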

Gemini 2.5 Pro for the day-level composition. Once Flash has emitted ~80 little JSON descriptions for the day, somebody has to read all of them and write a structured "what happened today, in scenes" entry. That's a longer-context structured-writing job — exactly Pro's wheelhouse. One call per day, so the price difference vs. Flash is irrelevant.

Claude for the morning narrative. Different job again — short prose with strong tone control. "Working naturalist's notebook, no purple prose, no Jane Austen impressions, just what happened." Claude is the model I have the best feel for on tone, and it's already on subscription credit. The output is the thing I actually read with coffee, so this is where the prose model earns its keep.

Total cost is under a dollar a day at current motion volume. The whole pipeline rides on subscription credit and Gemini's free-tier-adjacent pricing. That's the part of the story that would have been impossible two years ago.

The optimization journey

The first version was hilariously bad in a specific way.

Initial run, looking back through three days of footage: about a quarter of the clips had wrong tags, and the summary of the day was full of flowery language like "as the soft glow of dawn crept into the night sky" which, as a matter of personal preference, I find really irritating. Gemini was confidently calling clips incubating when the bird actually arrived in the last frame ("the nest is empty in the first two frames..."), and calling clips empty_nest when the bird was visible but the wide-angle Nest cam had compressed her down to a 30-pixel brown smudge in a 1920×1080 fisheye.

Two fixes:

- Transition handling in the prompt. Old version said "pick one tag." New version says: "if the bird's presence changes across frames, tag this `relief`, even if the bird is on the cup at the very start or very end." Tag distribution flipped: `relief` went 12 → 34, `incubating` false-positives went 34 → 18.

- Sex differentiation. Once I told the model that the female is uniformly brown and the male has a red head/throat, it could distinguish foraging visits (her) from food deliveries (him). The 27 Apr narrative now correctly notes "first male recorded near the nest, around 10:17", a thing I would have missed by eye.
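In the pipeline this rule lives in the prompt and the model applies it, but writing the same decision as code makes it easier to see. This is a sketch of my reading of the rule, with a hypothetical `tagFromPresence` helper and only the three tags discussed here:

```typescript
type Tag = "incubating" | "empty_nest" | "relief";

// Given per-frame "is the bird on the nest?" observations in clip order,
// apply the transition rule: any change in presence means "relief".
function tagFromPresence(birdOnNest: boolean[]): Tag | null {
  if (birdOnNest.length === 0) return null;
  const first = birdOnNest[0];
  const changed = birdOnNest.some((present) => present !== first);
  if (changed) return "relief";
  return first ? "incubating" : "empty_nest";
}
```

The old "pick one tag" behavior is what you get if only a single frame decides the answer: a clip where the bird arrives in the final frame comes out as `incubating`, which is the false positive described above.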

The trick that made the iteration cycle fast: I asked for a calibration frame. "For the purpose of calibration, the female is currently sitting on the nest." I grabbed a snapshot from the Reolink right then, sent it to Gemini with the new prompt, and got back {tag: incubating, bird_sex: female, bird_visible: true}. Ground truth confirmed; ship it.
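The calibration check is easy to automate if you end up iterating on the prompt more than once. A minimal sketch, assuming the model response has already been parsed into an object; `calibrationMismatches` is a hypothetical helper, not part of the original scripts:

```typescript
type ExpectedFields = Record<string, string | boolean | number>;

// Return the names of the expected fields whose values differ in the
// model output. An empty array means the calibration frame passed.
function calibrationMismatches(
  actual: Record<string, unknown>,
  expected: ExpectedFields,
): string[] {
  return Object.keys(expected).filter((key) => actual[key] !== expected[key]);
}

// The ground truth from the calibration frame described above.
const femaleOnNest: ExpectedFields = {
  tag: "incubating",
  bird_sex: "female",
  bird_visible: true,
};
```

Only the fields listed in the expectation are compared, so free-text fields like `description` can vary between runs without failing the check.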

What I'd say about all this

A few years ago this would have been a weekend project that ended at "buy the dedicated nest cam." A year ago it would have been a weekend project that ended at "set up the camera, manually scrub the footage." Today it's a Tuesday afternoon with a couple of TypeScript files, a few hundred Gemini Flash calls (less than a coffee), and a Claude subscription I already had.

That's the whole post. The birds aren't really the point, and the pipeline isn't the point. The point is that I could build this for fun, in an afternoon.

The five eggs are due to hatch somewhere between 11–14 May. When that happens the tag distribution will shift dramatically — feeding visits will jump from one every 90 minutes to one every 15 minutes. The prompt already has a feeding tag waiting for them. Meanwhile, I've learned enough along the way to have a decent idea of how to care for (and, as it turns out, mostly avoid) them over the next month or so, while getting the opportunity to observe and enjoy the process at the same time.

I guess the exhortation here is this: whatever weird, personal use of AI you've been quietly thinking about, go try to build it. The cost is tiny, the iteration is fast, and it doesn't have to be a grind. Sometimes it just watches a bird sit on a nest and tells you what happened while you slept. There is joy in exploring.